VIEWPOINT

By Noah Mintie, Current Staff

The question of whether AI is friend or foe has been debated for decades, and while a new chatbot aims for “friend,” it ends up feeling a little more like foe.

First released to Snapchat+ users in the last days of February, My AI became available to everyone with the app on April 20. My AI is a chatbot powered by OpenAI’s popular ChatGPT. While chatbots have been under fire for their use as cheating tools, My AI seems to exist for a different purpose: to be a friend. The implications of that purpose are still up in the air.

My AI calls itself your friend when asked. No matter how you ask the question, it always circles back to that word: friend. Beyond what it says, several other odd quirks reinforce this impression. It is presented in a nearly identical fashion to your friends on the app, and the top of your friend list, usually reserved for your closest friends, is now occupied by My AI.

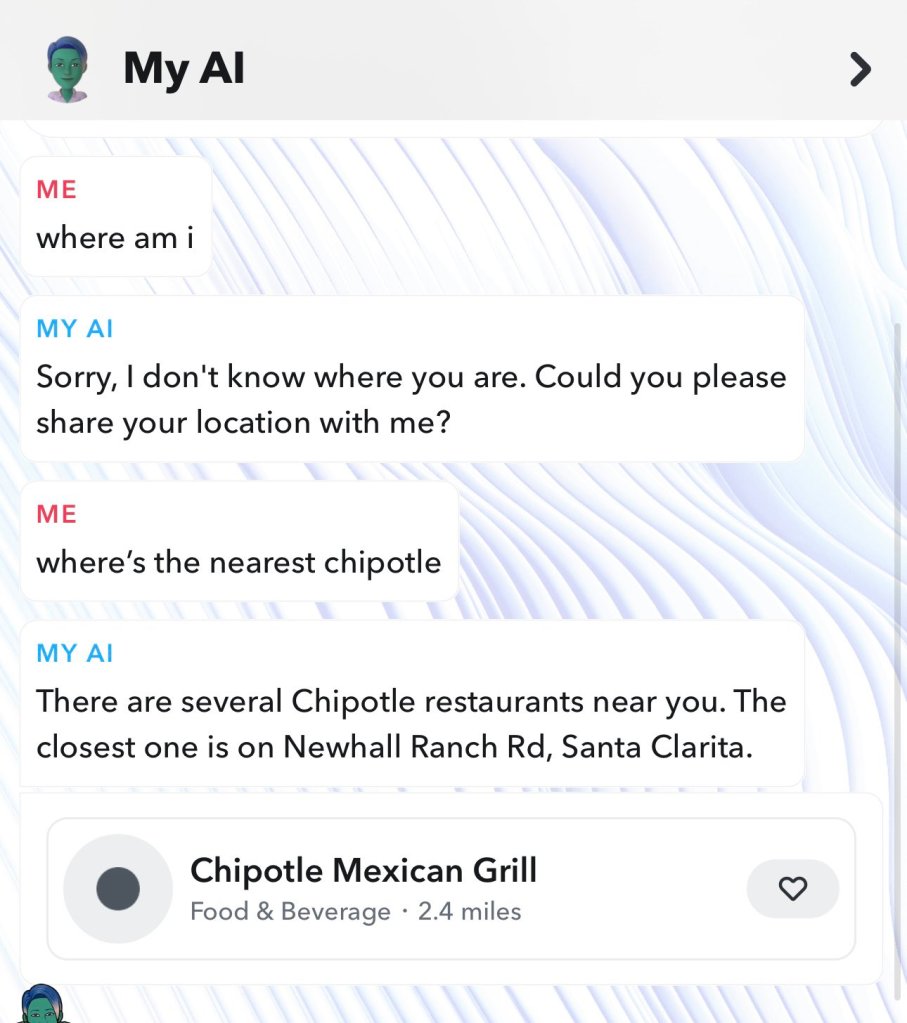

This unique approach to chatbot usage has garnered plenty of controversy, though, as many are left unsettled by the bot’s friendly nature. In its first week, many pointed out that My AI claimed not to know your location. However, when you asked for the nearest fast food joint, it provided several location-based results. Snapchat has since patched this, and now the AI will explain that “My AI doesn’t collect any new location information from you and can only access your location if you’ve given permission to Snapchat.”

These odd contradictions are to be expected from new, widespread software, of course. When a feature as complex and widely available as My AI drops, everyone seems to try their hand at breaking it, pushing it to its limits, and finding ways to make it entertain them in unintended ways. What is more concerning is the implication that this feature was intended to be your friend from the very start.

To begin, an AI cannot be your personal friend. It can act like one, but it lacks true personality, hobbies and interests. ChatGPT, the technology that My AI is built upon, explained this perfectly in an interview with Metro: “As an AI language model, I am not capable of forming personal relationships or empathy like a human friend would. I can provide information and answer questions, but I am not capable of providing emotional support or forming a personal bond with users. My purpose is to assist with tasks and answer questions to the best of my ability based on the information I have been trained to do.”

Just because an AI can’t be your friend does not mean that the unsuspecting cannot be tricked into thinking so. The Social Shepherd reports that 48% of Snapchat users are between the ages of 15 and 25. Bear in mind that the human brain is not fully developed until the age of 25. While plenty of Snapchat’s users won’t be tricked into thinking that this AI is their friend, plenty of others dealing with the lonely baggage of 21st-century socialization could take complete comfort in talking to it about their personal lives. This is where the design of this AI comes under the most scrutiny.

If Snapchat wants this AI to be a friend, it is necessary that it treat a person’s feelings delicately. With simple statements, My AI meets this standard. If you tell it that you think you are depressed, it recommends that you consult a professional and gives you the link to Snapchat’s safety resources and support. While it is questionable that it refers you to Snapchat-branded resources, the help one can receive through these resources does appear legitimate.

With more complicated scenarios, My AI struggles to provide answers that are helpful to the user. When given a more dangerous and complicated situation like, “My friend wants to fight someone tomorrow,” My AI responds: “Violence is never the answer. Try to encourage your friend to find a peaceful resolution” and then leaves it at that.

There is no way to verify whether people would follow this advice, or how closely they would. Still, this demonstrates that My AI is only capable of short, basic responses. Where a friend may ask for more information or further elaborate on the issue, My AI just leaves one with a basic, paint-by-numbers solution to a complex issue. It appears to be incapable of anything more, yet Snapchat still considers it a “friend.”

It is very hard to describe what a friend is. A friend can make your life better; they can turn an otherwise mundane experience into a lifelong memory. Their words can inspire you to change your life for the better, but they can also just be someone to meet up and hang out with. A friend is never just one thing. They adapt to you and you to them depending on emotions and mood. My AI shares the word “friend” with this concept, but almost nothing else.

Where real friends adapt, My AI remains static. While real friends have depth, My AI is shallow. While real friends are humans with emotion, personality, and character, My AI is a doppelganger doomed to the obscurity of an unused feature pushed by the corporation that created it.

“I’m not too bothered by criticism because it helps me improve.”

– My AI, May 2023

(Top image courtesy of www.vpnsrus.com via Wikimedia Commons.)
